Data agents for marketers that actually work

Our AI automates insights from dozens of critical data sources with full transparency and tested 95%+ consistency.

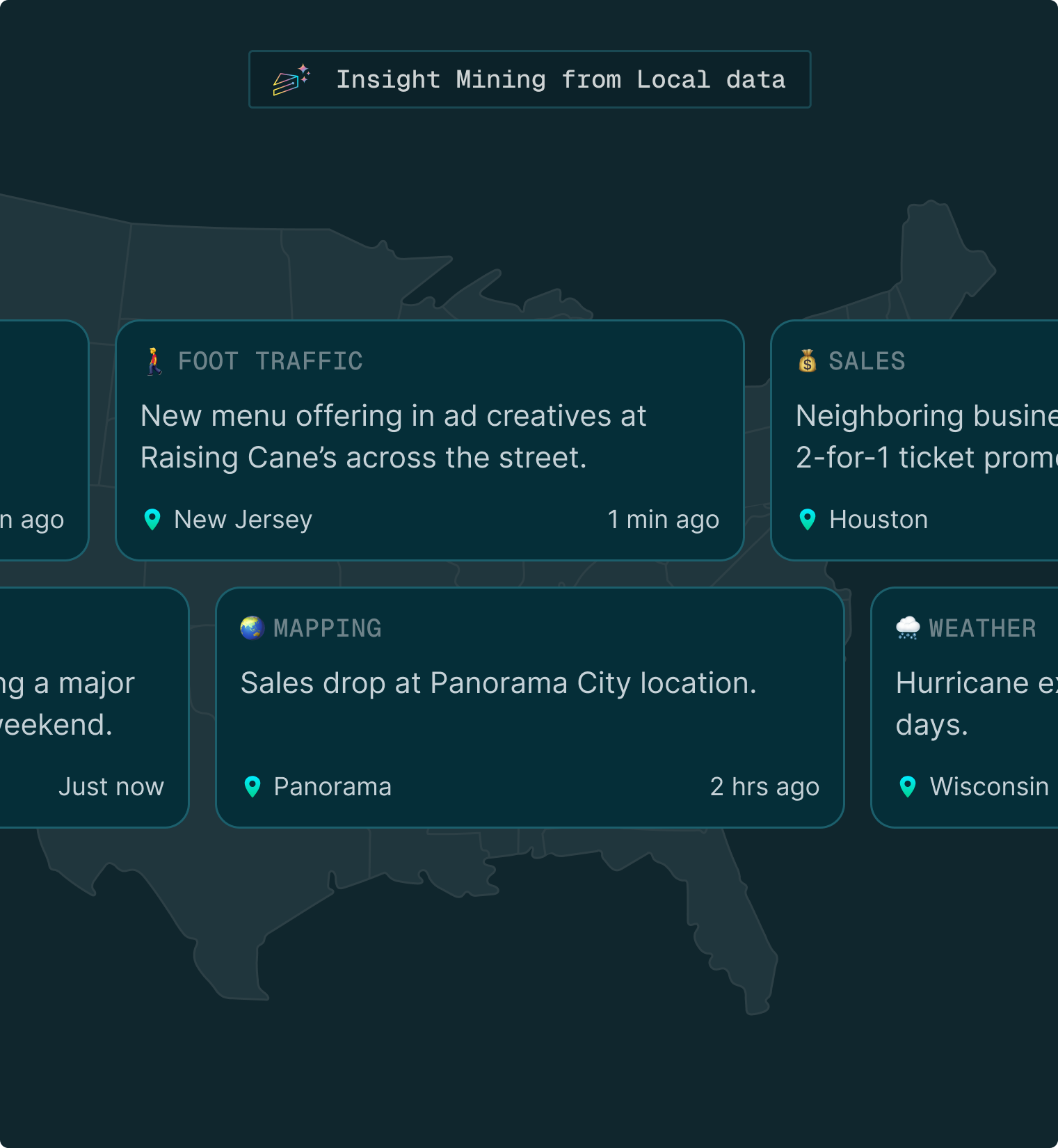

One home for KPI reporting and local data insights

Turn weeks of analytics work (repetitive reporting, custom data wrangling, basic insights generation) into fully automated reports in hours.

Automate KPI reporting across all channels:

Monitor Google, Meta, and other ad platforms in real time. Get instant anomaly alerts and turn weeks of manual reporting into days of streamlined collaboration.

Competitive intelligence on autopilot:

Gauge your competitive performance daily at a hyperlocal level, without exhausting internal analyst resources.

Monetize your data without sharing it:

Let customers query your proprietary survey data through AI while keeping the raw data secure. No more manual crosstabs or custom data requests.

Overall, wow. This is an incredible amount of information that is packed into this, and so much of it can be actionable.

I love how easy it is to read through and pull information from the sections... the combination of geographic maps, traffic trends, and channel mix graphs also tells helpful stories without being “too much”.

The way Datacakes uses AI is awesome. I used to spend hours (literally) crafting and tweaking SQL/Python to extract learnings. Now I have that time back!

Even better, it pulled out themes that I didn’t even know existed. That made for some compelling show and tell, and helped us evaluate customer messaging strategies.

Dedicated data solutions, purpose-built for:

Automated KPI performance reporting + anomaly detection

Syncing insights from multiple channels creates reporting chaos, especially when the work is done in silos. AdCake's AI agents eliminate the manual grunt work and facilitate a more collaborative, cohesive outcome.

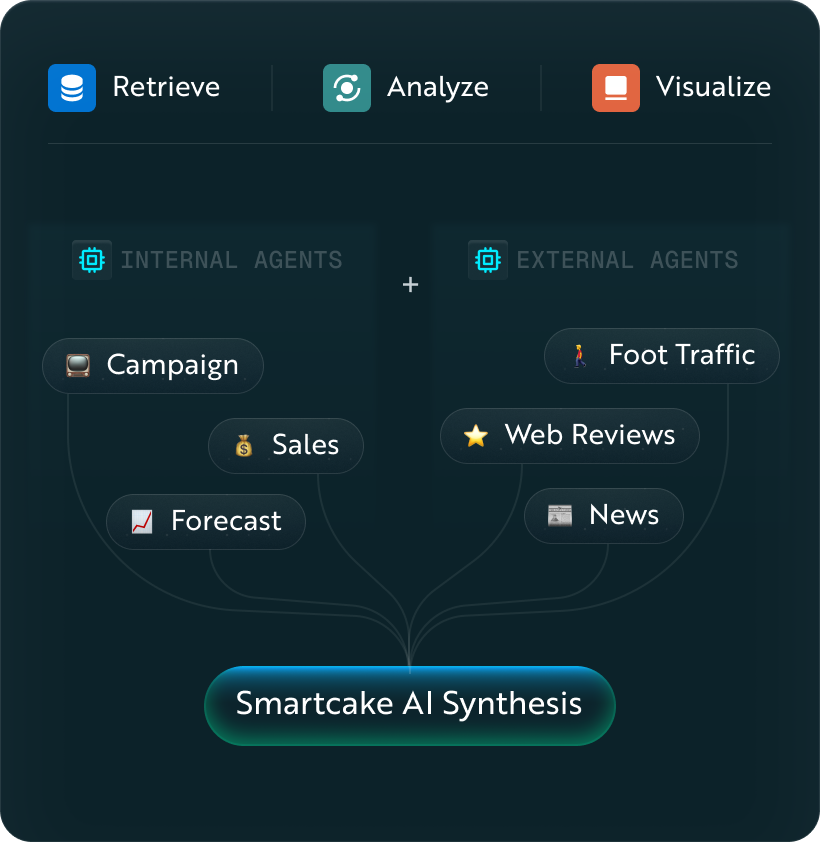

Apply local data sources for superior performance insights

Hyperlocal data exists but requires too many internal resources to implement. SmartCake AI automates the ingestion, delivering superior intelligence about your closest competitors.

Let customers talk to your survey data

You run a proprietary panel that customers want to explore, but you can’t hand over raw data. You’re swamped with custom analysis requests. Our AI Surveys agent handles all those customer queries so you don’t have to.

Get started today: talk to an expert.

No heavy data integrations. Reliable AI automation.
